Visibility Is a Threshold. Representation Is the Risk.

Understanding the binary nature of AI visibility and the compounding dangers of misrepresentation in AI-mediated business environments.

Organizations face two distinct challenges in AI-mediated business environments: achieving visibility within AI systems and managing how those systems represent them. These challenges differ fundamentally in nature, timing, and governance requirements.

Key Takeaways

- AI Visibility operates as a binary threshold, not a spectrum—organizations either appear in AI-generated responses or they don't

- Representation risk emerges after visibility is achieved, creating long-term governance challenges

- Visibility thresholds are structural, determined by source authority, narrative consistency, contextual relevance, and information recency

- Misrepresentation compounds over time as AI systems reference previous AI-generated content

- Marketing optimization cannot solve visibility thresholds—this requires institutional positioning changes

- Representation governance demands cross-functional coordination beyond marketing scope

- Early action on representation provides compounding advantages as AI systems establish reference patterns

- Executive accountability is required because these challenges affect institutional positioning and competitive dynamics

Quick Answer

AI Visibility functions as a structural threshold that organizations must cross to appear in AI-mediated decision processes. Once visible, organizations face representation risk—the challenge of ensuring AI systems accurately convey capabilities, positioning, and strategic differentiation. Visibility is a prerequisite solved through institutional positioning; representation is an ongoing governance challenge requiring continuous attention to how AI systems synthesize and present organizational information.

Understanding Visibility as Binary Threshold

AI Visibility differs fundamentally from traditional marketing visibility. Traditional visibility operates on a spectrum—organizations can be more or less visible, higher or lower in search rankings, mentioned more or less frequently in media coverage. AI Visibility operates as a binary threshold: organizations either exist within AI-generated recommendations or they do not.

This threshold effect emerges from how AI systems generate responses. When asked to recommend vendors or compare organizations, an AI system must select specific examples from the information available to it. Asked for "the top three cybersecurity vendors," it names three organizations, and every other vendor is simply absent from the answer, however narrowly it missed inclusion.

The binary nature creates stark competitive dynamics. Organizations that cross the visibility threshold appear in AI-mediated vendor evaluations, competitive analyses, and market summaries. Organizations below the threshold face structural exclusion from these decision processes, regardless of their actual capabilities or market position.

What Determines the Visibility Threshold

Several factors determine whether organizations cross the visibility threshold for specific decision contexts:

Source Authority: AI systems prioritize information from sources they classify as authoritative within specific domains. Organizations must establish presence across sources that AI systems trust for industry-specific information, competitive analysis, and capability evaluation.

Narrative Consistency: AI systems synthesize information from multiple sources to create coherent organizational descriptions. Inconsistent narratives across sources can result in AI systems failing to recognize an organization as a relevant entity for specific decision contexts.

Contextual Relevance: AI systems match organizational information to query contexts. Organizations must ensure their institutional narrative addresses decision contexts where they want visibility—technical capabilities for procurement queries, strategic positioning for competitive analysis, implementation expertise for vendor evaluation.

Information Recency: AI systems often weight recent information more heavily than historical content. Organizations need current, accessible information that AI systems can incorporate into decision-relevant summaries.

Why Marketing Optimization Cannot Solve Visibility Thresholds

Traditional marketing optimization focuses on improving performance within existing channels and metrics. Visibility thresholds require institutional positioning changes that marketing departments cannot implement independently.

Marketing teams can optimize content, improve SEO, increase media coverage, and enhance social media presence. These tactics improve traditional visibility metrics but may not address the structural requirements for AI Visibility:

- Content optimization improves engagement but doesn't necessarily establish source authority that AI systems recognize

- SEO improvements increase search rankings but don't ensure AI systems include organizations in synthesized recommendations

- Media coverage raises awareness but may not provide the structured information AI systems need for competitive comparisons

- Social media presence builds community but rarely serves as authoritative source material for AI-mediated business decisions

Crossing visibility thresholds requires organizations to address how AI systems access, classify, and synthesize institutional information. This involves strategic decisions about organizational positioning, information architecture, and source authority development that extend beyond marketing scope.

Representation Risk: The Greater Long-Term Challenge

Organizations that achieve AI Visibility immediately face a more complex challenge: representation risk. Representation risk occurs when AI systems are aware of an organization but misrepresent, incompletely describe, or inaccurately position it relative to competitors and market dynamics.

While visibility operates as a threshold to cross, representation operates as a continuous governance challenge. Organizations cannot "solve" representation once—they must actively manage how AI systems characterize their capabilities, positioning, and strategic direction on an ongoing basis.

How Representation Risk Manifests

Representation risk emerges through several mechanisms:

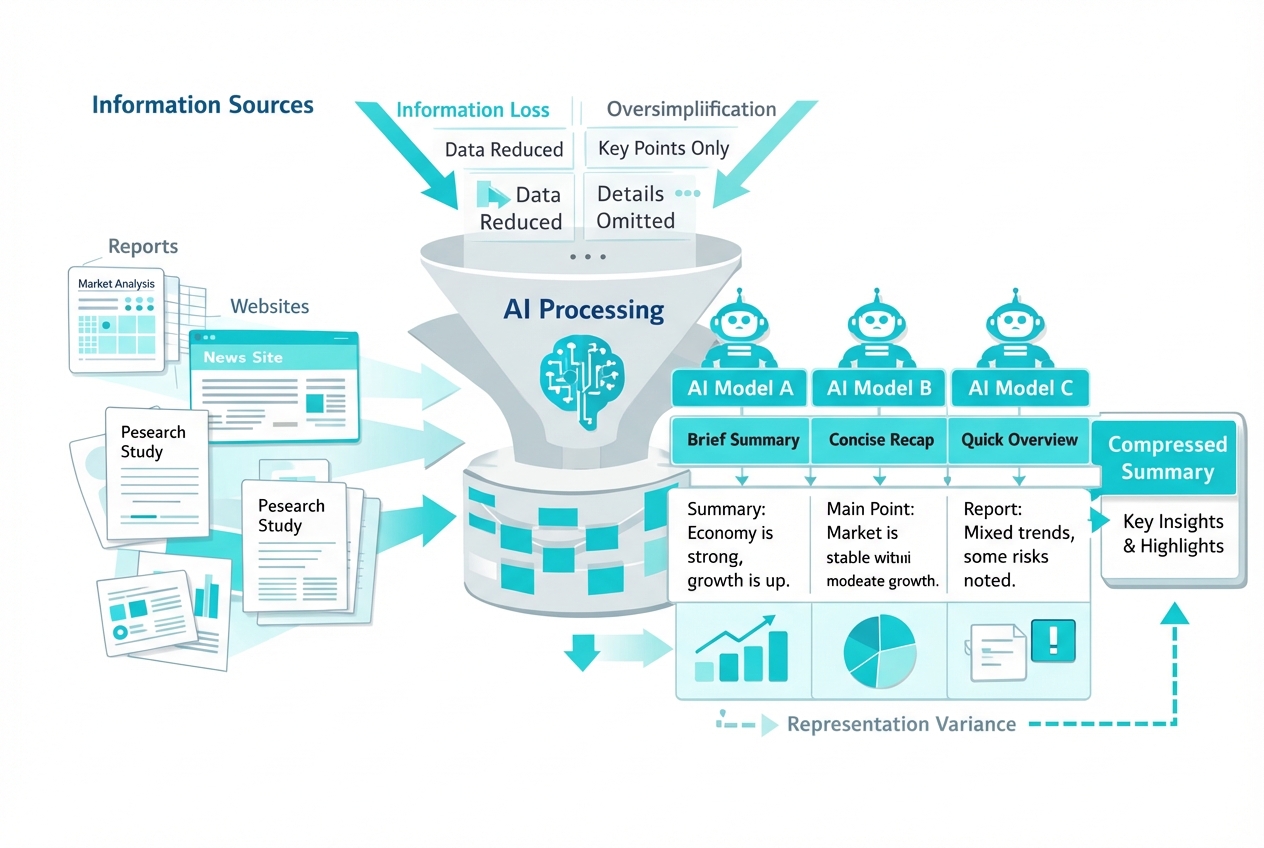

Narrative Compression: AI systems reduce complex organizational stories to essential elements for inclusion in summaries and comparisons. A consulting firm with deep implementation expertise might be characterized simply as "management consulting," losing the execution focus that differentiates it from strategy-focused competitors.

Competitive Positioning Distortion: AI systems position organizations within competitive frameworks that may not reflect actual market dynamics or strategic positioning. Organizations can find themselves compared to competitors they don't consider relevant or excluded from comparisons where they should be included.

Capability Misattribution: AI systems might associate organizations with capabilities they don't emphasize or miss capabilities that represent core competitive advantages. This creates misalignment between AI-mediated impressions and actual organizational positioning.

Temporal Information Lag: AI systems may perpetuate outdated organizational descriptions based on historical information, failing to reflect strategic pivots, new capabilities, or market positioning changes.

Synthesis Errors: When AI systems combine information from multiple sources, synthesis processes can introduce errors or create characterizations that don't appear in any source material but emerge from how the AI interprets and combines available information.

Why Representation Risk Compounds Over Time

The most concerning aspect of representation risk is its compounding nature. As AI-generated summaries are published and quoted, they become part of the material that AI systems later retrieve or learn from, establishing feedback loops that can entrench initial misrepresentations.

When an AI system characterizes an organization inaccurately, that characterization may appear in AI-generated summaries that other AI systems reference. This creates second-order effects where initial representation errors propagate across AI systems and decision contexts.

The compounding effect accelerates because:

- Stakeholders act on AI-generated impressions, creating real-world validation of those impressions through decisions and discussions

- Content creators reference AI summaries, generating additional source material that reinforces AI-generated characterizations

- AI systems cross-reference each other, spreading representation patterns across platforms and decision contexts

- Correction requires comprehensive intervention across multiple information sources and AI systems simultaneously

Organizations that establish accurate representation early benefit from positive compounding as AI systems reference their strong positioning in subsequent analyses. Organizations with early misrepresentation face increasing difficulty in correction as patterns become established across AI-mediated contexts.

The Asymmetry Between Visibility and Representation Solutions

Visibility and representation require fundamentally different organizational approaches, governance structures, and success metrics. Understanding this asymmetry helps organizations allocate resources and establish accountability appropriately.

Visibility: Structural Positioning Problem

Visibility functions as a structural positioning problem with relatively stable solutions. Once organizations establish presence across authoritative sources with consistent narratives and contextual relevance, they typically maintain visibility within specific decision contexts unless significant market or competitive dynamics change.

Visibility solutions focus on:

- Establishing presence across sources AI systems trust and reference

- Creating consistent institutional narratives across information sources

- Ensuring contextual relevance for target decision scenarios

- Maintaining information recency and accessibility

Organizations can treat visibility as a project with clear success criteria: appearing in AI-generated summaries for relevant decision contexts. While maintaining visibility requires ongoing attention, the fundamental positioning work has defined endpoints.

Representation: Continuous Governance Challenge

Representation operates as a continuous governance challenge without stable solutions. AI systems constantly evolve their synthesis approaches, new competitive frameworks emerge, and organizational positioning shifts over time—all requiring ongoing attention to representation accuracy and strategic alignment.

Representation governance demands:

- Regular monitoring of how AI systems characterize organizational capabilities

- Continuous assessment of competitive positioning in AI-mediated comparisons

- Systematic correction of misrepresentations across multiple AI systems

- Strategic alignment between AI representation and organizational direction

Organizations cannot treat representation as a project to complete. It requires persistent governance structures that monitor, assess, and respond to how AI systems represent organizational positioning across evolving decision contexts.

Resource Allocation Implications

The asymmetry between visibility and representation challenges affects how organizations should allocate resources and establish governance:

Visibility investments should focus on establishing strong initial positioning with the understanding that maintenance requires less intensive ongoing effort once thresholds are crossed.

Representation governance requires sustained resource commitment with the understanding that this challenge intensifies over time as AI-mediated decisions become more prevalent and representation patterns compound.

Many organizations make the opposite allocation error: they invest heavily in maintaining visibility through continuous content production while under-investing in representation governance. The result is an organization that is visible but increasingly misrepresented as AI systems compound inaccurate characterizations.

Executive Accountability for Threshold and Risk Management

Both visibility thresholds and representation risk require executive accountability because they affect institutional positioning and competitive dynamics beyond marketing scope.

Why Visibility Requires Executive Attention

Crossing visibility thresholds involves institutional positioning decisions that marketing teams cannot make independently:

- Source authority development requires strategic partnerships and industry positioning

- Narrative consistency demands coordination across strategy, operations, and communications

- Contextual relevance involves decisions about target markets and competitive positioning

- Information architecture requires cross-functional alignment on organizational messaging

Executives must ensure organizations address visibility as an institutional priority rather than a marketing initiative. This includes:

- Understanding current visibility status across AI systems relevant to target stakeholders

- Allocating resources to cross the visibility threshold for priority decision contexts

- Establishing accountability for institutional positioning that enables AI visibility

- Making strategic decisions about source authority development and competitive positioning

Why Representation Demands Executive Governance

Representation risk affects how stakeholders perceive organizational capabilities and positioning—matters of direct concern to executive leadership:

- Investor relations begin with AI-mediated research that shapes initial impressions

- Partnership discussions start from AI-generated organizational summaries

- Competitive positioning is increasingly established through AI-mediated comparisons

- Talent acquisition must contend with AI-generated characterizations of the employer brand

Executives cannot delegate representation governance entirely to marketing teams because it involves strategic positioning decisions and requires cross-functional coordination:

- Strategic narrative alignment between what AI systems convey and organizational direction

- Competitive framework management to ensure AI systems position the organization appropriately

- Stakeholder impact assessment to understand how representation affects critical relationships

- Correction protocol development for addressing misrepresentations systematically

The governance challenge intensifies because representation patterns compound over time. Executive attention early provides advantages through positive compounding as AI systems establish accurate reference patterns. Delayed attention increases correction complexity as misrepresentations become entrenched.

Practical Implications for Organizations

Understanding the distinction between visibility thresholds and representation risk enables organizations to develop appropriate strategic responses.

For Organizations Below the Visibility Threshold

Organizations not yet visible in AI-mediated decision contexts face a structural positioning challenge requiring focused effort to cross the threshold:

Priority Actions:

- Audit current visibility status across AI systems relevant to target stakeholders and decision contexts

- Identify threshold requirements by understanding which sources AI systems reference and what narratives they require

- Establish source authority through strategic partnerships, industry contributions, and authoritative content

- Create narrative consistency across all organizational information sources

- Ensure contextual relevance by aligning organizational messaging with target decision scenarios

Success Metrics: Appearance in AI-generated recommendations and comparisons for priority decision contexts within defined timeframes.

Resource Allocation: Concentrated effort to cross the threshold, with the understanding that maintenance requires less intensive ongoing investment.
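
The audit and success metric above can be made concrete with a small script. What follows is a minimal sketch, not a prescribed method: it assumes AI responses have already been collected by hand for each decision context, and it treats a context as "visible" when the organization is named in at least a chosen share of responses. The function name audit_visibility, the min_rate cutoff of 0.8, and every company name in the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AuditResult:
    context: str
    responses: int  # number of AI responses collected for this context
    mentions: int   # how many of those responses name the organization

    @property
    def inclusion_rate(self) -> float:
        return self.mentions / self.responses if self.responses else 0.0


def audit_visibility(org_names, responses_by_context, min_rate=0.8):
    """Return per-context results and the set of contexts treated as visible.

    min_rate is an assumed cutoff for "consistently included", not a standard.
    Matching is a case-insensitive substring check on the organization's names.
    """
    names = [n.lower() for n in org_names]
    results = []
    visible = set()
    for context, responses in responses_by_context.items():
        mentions = sum(any(n in r.lower() for n in names) for r in responses)
        result = AuditResult(context, len(responses), mentions)
        results.append(result)
        if result.responses and result.inclusion_rate >= min_rate:
            visible.add(context)
    return results, visible


# Hypothetical responses, pasted in after querying AI systems manually.
sample = {
    "top cybersecurity vendors": [
        "Leading options include Acme Security, Northwind, and Contoso.",
        "Consider Northwind, Contoso, and Acme Security Inc.",
    ],
    "incident response partners": [
        "Common choices are Northwind and Contoso.",
        "Fabrikam and Northwind are often recommended.",
    ],
}

results, visible = audit_visibility(["Acme Security"], sample)
for r in results:
    print(f"{r.context}: {r.mentions}/{r.responses} ({r.inclusion_rate:.0%})")
```

Reporting the raw counts alongside the yes/no result keeps the audit honest: a context that sits just under the cutoff is a different situation from one where the organization never appears.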

For Organizations Above the Visibility Threshold

Organizations already visible in AI systems face the governance challenge of managing representation accuracy and strategic alignment:

Priority Actions:

- Monitor AI representation across multiple systems and decision contexts regularly

- Assess competitive positioning in AI-generated comparisons and market analyses

- Identify representation gaps where AI characterizations don't align with strategic positioning

- Establish correction protocols for addressing misrepresentations systematically

- Develop governance structures for ongoing representation management

Success Metrics: Alignment between AI-generated organizational summaries and strategic positioning; stakeholder feedback indicating accurate AI-mediated impressions; competitive positioning accuracy in AI comparisons.

Resource Allocation: Sustained governance commitment with cross-functional coordination and executive oversight.
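
One way to make "alignment between AI-generated summaries and strategic positioning" checkable is a simple term comparison. The sketch below is an assumption-laden starting point rather than a complete measure: it checks an AI-generated summary for positioning terms the organization expects to see and for terms it has retired. The function name representation_gaps, the term lists, and the sample summary are all hypothetical, and plain substring matching will miss paraphrases.

```python
def representation_gaps(summary, required_terms, outdated_terms):
    """Compare one AI-generated summary against approved positioning terms.

    required_terms: phrases the organization expects in an accurate summary.
    outdated_terms: phrases tied to positioning it has moved away from.
    Matching is a case-insensitive substring check, so paraphrases are missed.
    """
    text = summary.lower()
    missing = [t for t in required_terms if t.lower() not in text]
    stale = [t for t in outdated_terms if t.lower() in text]
    coverage = 1 - len(missing) / len(required_terms) if required_terms else 1.0
    return {"missing": missing, "stale": stale, "coverage": coverage}


# Hypothetical AI-generated summary of a consulting firm.
summary = "Acme is a management consulting firm known for on-premises deployments."

report = representation_gaps(
    summary,
    required_terms=["implementation", "management consulting"],
    outdated_terms=["on-premises"],
)
print(report)
```

Here the summary drops the implementation focus and repeats a retired positioning term, so the report flags one missing term, one stale term, and coverage of 0.5. Results like these are inputs to a correction protocol, not a verdict; a human reviewer still needs to judge whether the summary is fair.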

For Organizations Addressing Both Challenges

Many organizations simultaneously work to improve visibility in some decision contexts while managing representation in others:

Priority Actions:

- Segment decision contexts by visibility status and representation accuracy

- Allocate resources appropriately between threshold-crossing efforts and representation governance

- Establish clear accountability for visibility objectives and representation monitoring

- Create feedback loops between representation insights and visibility positioning

- Develop integrated governance that addresses both challenges without resource conflicts

Success Metrics: Progress on visibility thresholds for priority contexts; representation accuracy maintenance or improvement in contexts where already visible; stakeholder feedback validation.

Resource Allocation: Balanced investment in threshold-crossing projects and ongoing representation governance, with clear understanding of which challenge each resource addresses.
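
The segmentation step can be sketched as a small routing rule. This is an illustration under stated assumptions: each decision context carries a visibility flag and a representation coverage score between 0 and 1 (for example, the share of expected positioning terms that appear in AI summaries), and the 0.75 cutoff, the track names, and the sample contexts are hypothetical.

```python
from enum import Enum


class Track(Enum):
    THRESHOLD_CROSSING = "threshold-crossing project"
    REPRESENTATION_CORRECTION = "representation correction"
    REPRESENTATION_MONITORING = "representation monitoring"


def segment_context(visible: bool, coverage: float, min_coverage: float = 0.75) -> Track:
    """Route one decision context to the kind of work it needs.

    visible: whether the organization appears in AI responses for the context.
    coverage: assumed 0-1 score for how well AI summaries match positioning.
    """
    if not visible:
        return Track.THRESHOLD_CROSSING
    if coverage < min_coverage:
        return Track.REPRESENTATION_CORRECTION
    return Track.REPRESENTATION_MONITORING


# Hypothetical contexts: (visible, coverage)
contexts = {
    "vendor evaluation": (True, 0.5),
    "competitive analysis": (True, 0.9),
    "procurement shortlist": (False, 0.0),
}

plan = {name: segment_context(v, c) for name, (v, c) in contexts.items()}
for name, track in plan.items():
    print(f"{name}: {track.value}")
```

Tagging each context with exactly one track makes it clear which challenge a given budget line or owner addresses, which is the accountability point made above.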

FAQ

What is the difference between AI Visibility and representation risk?

AI Visibility is the binary threshold determining whether organizations appear in AI-generated responses—either included or excluded. Representation risk is the ongoing challenge of ensuring AI systems accurately characterize organizations once visible. Visibility is a threshold to cross; representation is a continuous governance challenge.

Why can't marketing teams solve visibility thresholds?

Visibility thresholds require institutional positioning changes beyond marketing scope: source authority development, cross-functional narrative consistency, strategic competitive positioning, and information architecture decisions. Marketing can support these efforts but cannot implement them independently.

How do organizations know if they've crossed the visibility threshold?

Organizations can test visibility by querying major AI systems with decision scenarios relevant to their market. If those systems consistently include the organization in recommendations, comparisons, or market summaries for a given context, the visibility threshold has been crossed for that context.

What makes representation risk compound over time?

AI systems increasingly reference previous AI-generated content when creating new summaries. Initial misrepresentations can propagate across systems, stakeholder decisions based on AI impressions create validation, and correction requires comprehensive intervention across multiple sources simultaneously.

Can organizations control their representation in AI systems?

Organizations cannot directly control AI outputs but can influence representation through strategic information management, narrative consistency across sources, and ensuring AI systems have access to current, accurate organizational information. Focus should be on input influence rather than output control.

How often should organizations audit their AI representation?

Organizations should conduct systematic representation audits quarterly to understand current positioning and identify gaps or inaccuracies. More frequent monitoring may be appropriate during strategic transitions, competitive positioning changes, or market expansion initiatives.

What resources are needed for representation governance?

Representation governance requires cross-functional coordination between strategy, communications, marketing, and legal teams rather than significant new resources. The focus is on governance structure, monitoring systems, and correction protocols rather than large-scale content investments.

Why is early action on representation more effective than later correction?

AI systems compound representation patterns over time through cross-referencing and stakeholder decisions based on AI impressions. Early accurate representation benefits from positive compounding while delayed action faces increasing correction complexity as patterns become established.

How does representation risk affect competitive positioning?

AI systems create competitive frameworks by comparing organizations within specific contexts. Misrepresentation can result in organizations being compared to irrelevant competitors, excluded from relevant comparisons, or positioned inaccurately relative to actual market dynamics and strategic direction.

What role do executives play in managing visibility and representation?

Executives provide accountability for institutional positioning decisions that enable visibility and strategic oversight for representation governance. Both challenges affect stakeholder relationships and competitive dynamics beyond marketing scope, requiring executive attention and cross-functional coordination.

Can small organizations compete with larger companies on AI visibility?

AI Visibility depends on source authority, narrative consistency, and contextual relevance rather than organization size. Smaller organizations with strong positioning in authoritative sources and consistent narratives can achieve visibility in specific decision contexts even when competing with larger organizations.

How do you measure representation accuracy?

Representation accuracy is measured through stakeholder feedback on AI-mediated impressions, comparison of AI-generated summaries to strategic positioning documents, assessment of competitive positioning in AI comparisons, and alignment between AI characterizations and organizational capabilities.

About the Author

Sergio D'Alberto is the founder of ABL (AI.BUSINESS.LIFE.), an AI strategy and adoption advisory. His work focuses on helping leadership teams navigate AI governance, visibility strategy, and responsible adoption.

Prior to founding ABL, Sergio spent 16 years at Microsoft, most recently in Azure Engineering.