The Representation Risk in AI: Why Inaccurate AI Descriptions of Your Business Compound Over Time

Being visible to AI systems is not enough. Inaccurate AI representations of your business create compounding stakeholder harm. This essay explains the mechanics of representation risk and how to address it before the damage becomes structural.

Most organizations that discover that AI systems mention them feel a degree of relief. They exist. They are visible. The concern about AI invisibility, at least, does not apply to them. What many do not realize is that being visible in AI systems with an inaccurate description can be more damaging than not being mentioned at all.

Key Takeaways

- Representation risk is distinct from visibility risk — an organization can be visible and still be systematically misrepresented

- AI systems compress complex organizational narratives into summaries that lose nuance, strategic positioning, and competitive differentiation

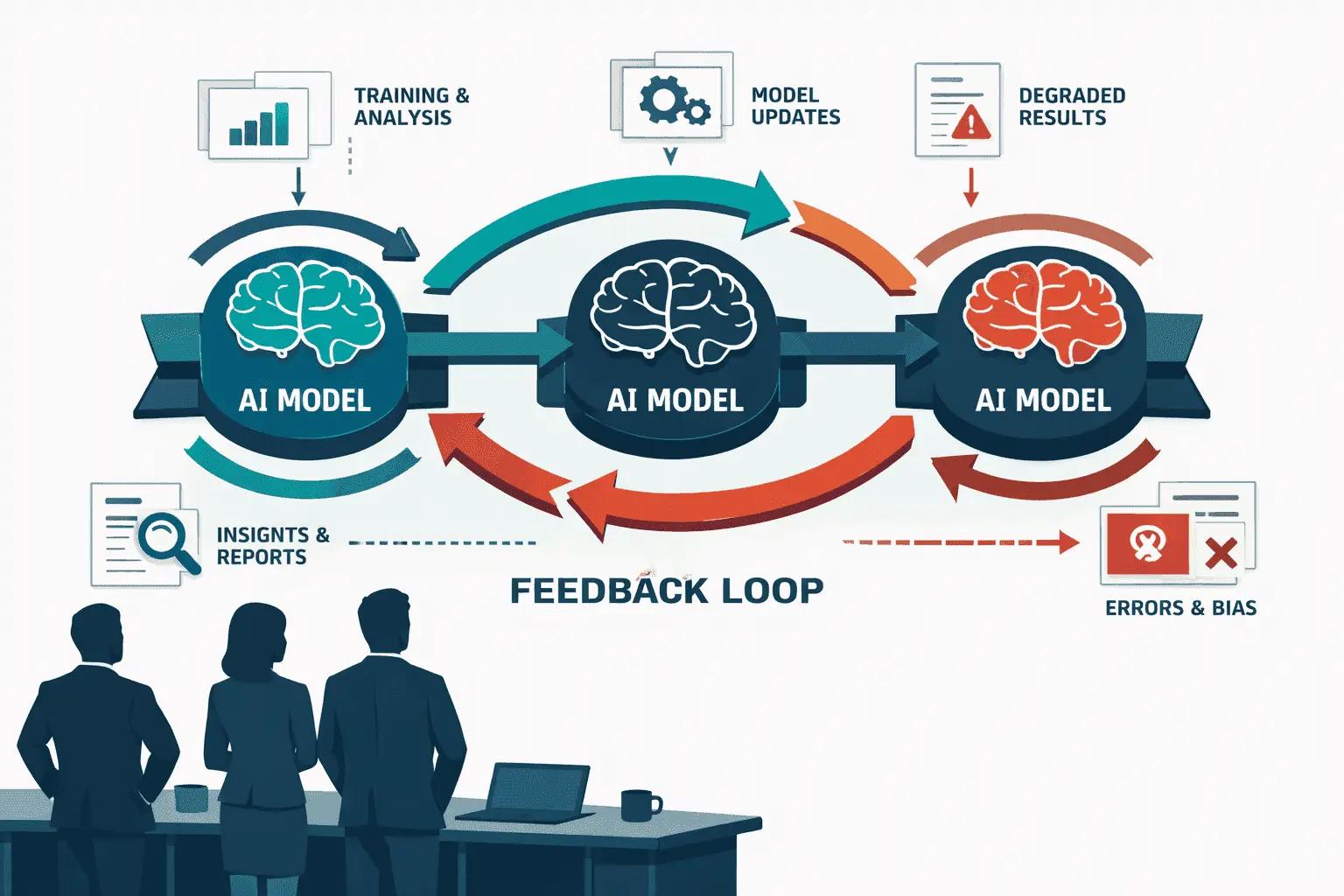

- Misrepresentation compounds over time because AI systems reference previous AI-generated content, creating feedback loops that entrench inaccuracies

- The four main misrepresentation failure modes are: outdated characterization, category misattribution, capability compression, and competitive misplacement

- Representation errors affect different stakeholder categories differently — what misleads a prospective customer differs from what misleads an investor or a potential hire

- Correcting established misrepresentation is significantly more difficult than establishing accurate representation from the start

- The source of misrepresentation is usually upstream content, not the AI system itself — fixing the AI output requires fixing the input sources

- Organizations that monitor AI representation proactively can intervene before inaccuracies become structurally embedded

Quick Answer

Representation risk is the condition in which an organization is present in AI-generated summaries but described inaccurately, incompletely, or in ways that do not align with its actual strategic positioning. Because AI systems frequently reference previous AI-generated content as source material, initial misrepresentations propagate and amplify over time. An organization described as a "small consulting firm" when it has scaled to enterprise services, or characterized as "focused on SMBs" when it has shifted upmarket, will carry those inaccurate descriptions into every AI-mediated stakeholder interaction until the underlying sources are corrected.

Understanding Representation Risk as Distinct from Visibility Risk

The AI visibility conversation has focused primarily on presence: do AI systems mention your organization when relevant? This is the right starting question. But passing the visibility threshold introduces a second, more complex challenge.

Visibility without accuracy is potentially worse than absence. A prospective customer who receives no AI-generated information about your organization may seek additional research. A prospective customer who receives confidently stated but inaccurate information about your organization forms an impression that is difficult to correct in subsequent human engagement.

The distinction matters for governance. Solving visibility requires building presence in the right sources and communities. Solving representation risk requires auditing what AI systems say when they do mention you — and systematically correcting the upstream sources driving inaccurate outputs.

These are different problems requiring different interventions. Organizations that conflate them apply visibility solutions to representation problems and wonder why AI descriptions remain inaccurate despite increased content output.

The Four Primary Misrepresentation Failure Modes

AI misrepresentation of businesses tends to follow consistent patterns. Understanding these patterns helps organizations identify where their own representation is most at risk.

Outdated Characterization

AI systems are trained on historical data and retrieve content from sources that were indexed at various points in time. An organization that has undergone significant strategic transformation (a pivot, a market expansion, a rebrand, a merger, or a capability addition) may be described based on what it was two or three years ago, not what it is today.

The typical scenario: a software company that started in the SMB market and successfully moved upmarket to enterprise is still described by AI systems as "an SMB-focused tool." The older content characterizing the company's SMB origins is more voluminous, more widely referenced, and more deeply embedded in AI training data than the newer content describing the enterprise positioning.

Outdated characterization is the most common form of representation risk and the one that most directly undermines sales and partnership conversations. When AI-mediated first impressions don't match what a company actually is, the gap creates friction that sales teams must spend time resolving.

Category Misattribution

AI systems must place organizations into categories to make them comparable and searchable. Category assignment is based on the language most commonly associated with the organization across indexed sources. When that language is ambiguous, inconsistent, or dated, category assignment errors occur.

The typical scenario: a consultancy that specializes in AI governance is categorized by AI systems as a "digital transformation consultancy" because the term "digital transformation" appears more frequently in its older content than the more specific "AI governance" language it has adopted recently. In AI-mediated searches for AI governance specialists, the firm may not appear.

Category misattribution is particularly damaging for organizations in emerging fields where the category language itself is evolving. Organizations that fail to consistently use the right category language across all content sources may find themselves mapped to adjacent but less relevant categories.

Capability Compression

AI systems compress complex organizational capability sets into brief summaries. This compression is inevitable — AI responses to broad questions cannot include full service catalogs. The risk is that compression removes the specific differentiators that make an organization competitively distinct.

The typical scenario: a professional services firm with deep expertise in pharmaceutical regulatory compliance is described by AI systems as "a compliance consulting firm." The pharmaceutical specialization, which is the firm's primary source of competitive advantage and the reason clients hire it, does not survive compression. In AI-mediated comparisons with other compliance firms, the differentiator is invisible.

Capability compression is difficult to prevent entirely, but it can be managed. Organizations that make their specific differentiators explicit, quotable, and structurally prominent in their content — rather than embedded in marketing prose — give AI systems better material to preserve through compression.

Competitive Misplacement

AI systems frequently generate competitive comparisons and positioning analyses. These are among the most consequential AI outputs for organizational strategy, because they shape how analysts, investors, and strategic partners frame the competitive landscape.

The typical scenario: an enterprise security firm is consistently placed by AI systems in competitive comparisons with consumer-grade security products, because early coverage of the firm appeared in consumer technology publications before its enterprise pivot. In enterprise buyer contexts, being compared to consumer products rather than enterprise peers damages credibility before any direct engagement.

Competitive misplacement often reflects the historical sources that gave an organization its early coverage — sources that no longer represent its actual market position.

How Misrepresentation Compounds

The compounding mechanism is the reason representation risk is a governance priority rather than a maintenance task.

AI systems increasingly cite and reference other AI-generated content. When a summary generated by one AI system is published online — in a blog post, a product comparison article, an AI-generated market overview — it becomes source material for subsequent AI training and retrieval. Inaccurate characterizations in one AI output propagate into others.

The cycle operates as follows: an AI system generates an inaccurate description based on outdated source material. That description appears in AI-assisted content published online. Other AI systems retrieve and synthesize that published content. The inaccuracy is now present in a secondary source that carries its own authority weight. Subsequent AI training incorporates both the original source and the secondary source, reinforcing the inaccurate characterization.

Each cycle makes correction more resource-intensive. The organization must now correct not only the original upstream sources but also the derivative content those sources generated. In markets with active AI-assisted content production — where competitor analyses, market overviews, and vendor comparisons are regularly generated with AI assistance — misrepresentations can propagate to dozens of secondary sources within months.

The practical implication: organizations that identify and address representation inaccuracies early face a far smaller correction effort than organizations that wait until the inaccuracy is embedded across a wide source ecosystem.
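The compounding cycle described in this section can be illustrated with a toy model. This is a sketch under loud assumptions, not a measurement: it supposes that every source carrying the inaccuracy yields a fixed number of derivative pieces per cycle, each of which repeats the inaccuracy. The function name, the starting count, and the rate are all hypothetical values chosen for illustration.

```python
def inaccurate_sources(initial: int, derivatives_per_source: int, cycles: int) -> list[int]:
    """Number of sources carrying the inaccuracy after each cycle.

    Assumption: each existing inaccurate source spawns a fixed number of
    derivative pieces per cycle. Real propagation is neither uniform nor
    this regular; the point is only the shape of the growth.
    """
    counts = [initial]
    for _ in range(cycles):
        counts.append(counts[-1] * (1 + derivatives_per_source))
    return counts


# Illustrative numbers only: 3 outdated sources, 1 derivative each per cycle.
early = inaccurate_sources(initial=3, derivatives_per_source=1, cycles=1)
late = inaccurate_sources(initial=3, derivatives_per_source=1, cycles=4)
print(early)  # [3, 6]
print(late)   # [3, 6, 12, 24, 48]
```

Under these assumed numbers, an organization that intervenes after one cycle has 6 sources to correct, while one that waits four cycles has 48. The specific figures are invented; the growth pattern is what the model is meant to show.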

Stakeholder-Specific Representation Impact

Misrepresentation affects different stakeholder categories through different mechanisms. A comprehensive governance approach requires understanding which misrepresentations affect which audiences.

Prospective customers encounter AI misrepresentation during vendor research. Category misattribution means they don't find your organization in relevant searches. Outdated characterization means their initial impression is of a company you no longer are. Capability compression means your differentiators don't appear in initial comparisons.

Investors and analysts use AI systems for initial market landscape research. Competitive misplacement means your organization appears in the wrong peer group. Outdated characterization means growth or transformation is invisible. Category misattribution means you are absent from emerging market analyses where you should be present.

Potential hires increasingly use AI tools to research employers before applying. Capability compression that obscures the sophistication of your work affects the quality of candidates who self-select in. Outdated characterization of your culture or scale shapes candidate expectations that do not match reality.

Strategic partners evaluate potential collaborations using AI-assisted research. Competitive misplacement relative to their own positioning affects their initial assessment of fit. Category misattribution may mean they don't identify you as a relevant partner at all.

Correcting Representation: Working Upstream

The critical governance principle for representation risk is that AI outputs cannot be reliably corrected by addressing the AI system directly. AI systems generate outputs based on the sources they have accessed, so changing the outputs requires changing the sources those systems use.

For outdated characterization: the correction requires publishing sufficient current content across authoritative sources — including press coverage, analyst briefings, structured website data, and industry database entries — to shift the recency and volume balance toward current positioning. A press release alone is insufficient. The correction requires a coordinated update across multiple sources that AI systems reference with high weight.

For category misattribution: the correction requires consistent language adoption across all organizational content. If the correct category term does not appear in your website, in press coverage, in analyst reports, and in community discussions, AI systems will continue to use the category language that does appear. Consistency across all sources is what shifts category assignment.

For capability compression: the correction requires making differentiators explicitly, specifically, and repeatedly visible in quotable form. Generic marketing language does not survive AI compression. Specific, quantified, standalone statements about capabilities do.

For competitive misplacement: the correction requires changing which competitive contexts your organization appears in. This often means generating coverage in enterprise or industry publications where your actual peers appear, rather than relying on older coverage in publications associated with your previous market position.
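For the category misattribution case above, the consistency check can be sketched in a few lines. This is a minimal example, assuming you have already gathered the text of each source yourself. The function names and the sample snippets are hypothetical, and plain substring counting is only a rough proxy for how AI systems actually weigh language.

```python
def term_counts(sources: dict[str, str], terms: list[str]) -> dict[str, dict[str, int]]:
    """Count case-insensitive occurrences of each term in each source text."""
    return {
        name: {term: text.lower().count(term.lower()) for term in terms}
        for name, text in sources.items()
    }


def sources_missing(sources: dict[str, str], term: str) -> list[str]:
    """Names of sources where the preferred category term never appears."""
    return [name for name, text in sources.items() if term.lower() not in text.lower()]


# Hypothetical source snippets, for illustration only.
sources = {
    "website": "We are an AI governance consultancy.",
    "press": "The digital transformation consultancy announced a new practice.",
    "directory": "Digital transformation and AI governance advisory.",
}
counts = term_counts(sources, ["AI governance", "digital transformation"])
missing = sources_missing(sources, "AI governance")
print(missing)  # ['press']
```

Sources that appear in `missing` are the ones still carrying only the older category language, which makes them natural candidates for the first round of corrections.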

FAQ

What is the difference between representation risk and visibility risk?

Visibility risk is the risk of not being mentioned by AI systems when relevant. Representation risk is the risk of being mentioned inaccurately. Both require attention, but they require different interventions. Visibility is about presence; representation is about accuracy.

How do we know if AI systems are misrepresenting our organization?

Query major AI systems with the questions your stakeholders would ask: "what does [company name] do," "who are the competitors of [company name]," "what type of clients does [company name] serve." Compare the responses to your actual strategic positioning. The gaps between those responses and accurate positioning define your representation risk.
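A minimal sketch of that audit follows. It assumes the responses are collected by hand or with whatever tooling you already use; no vendor API is assumed or called. The `Positioning` record, the function names, and the company name "Acme" are hypothetical, and the substring matching is a crude stand-in for a person reading the response.

```python
from dataclasses import dataclass

# Query templates taken from the questions above; {company} is filled in per audit.
QUERY_TEMPLATES = [
    "what does {company} do",
    "who are the competitors of {company}",
    "what type of clients does {company} serve",
]


@dataclass
class Positioning:
    """Hypothetical record of how the organization should be described."""
    current_terms: list[str]   # language that reflects today's positioning
    outdated_terms: list[str]  # language that reflects a previous positioning


def build_queries(company: str) -> list[str]:
    """Fill the company name into each stakeholder-style query."""
    return [template.format(company=company) for template in QUERY_TEMPLATES]


def find_gaps(response: str, positioning: Positioning) -> dict[str, list[str]]:
    """Compare one AI response against the positioning record.

    Uses case-insensitive substring matching only.
    """
    text = response.lower()
    return {
        "missing_current": [t for t in positioning.current_terms if t.lower() not in text],
        "present_outdated": [t for t in positioning.outdated_terms if t.lower() in text],
    }


# Illustrative usage with an invented response.
queries = build_queries("Acme")
positioning = Positioning(current_terms=["enterprise"], outdated_terms=["SMB"])
gaps = find_gaps("Acme is an SMB-focused tool.", positioning)
print(gaps)  # {'missing_current': ['enterprise'], 'present_outdated': ['SMB']}
```

Keeping the results per query and per AI system over time gives you a record to compare against in later audits.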

Can we contact AI companies to correct misrepresentations?

Major AI companies have feedback mechanisms, but they operate at scale and are not designed for individual business corrections. The more reliable approach is to correct the upstream sources AI systems reference. Changing the source material changes the outputs.

How long does it take for source corrections to affect AI outputs?

It varies and is hard to predict. AI training data has update cycles that differ by system. Retrieval-based AI systems that access live web content may update representations faster than systems relying primarily on training data. In general, organizations should expect months rather than weeks for source corrections to propagate into changed AI outputs.

Is representation risk more serious for smaller or larger organizations?

Both face different forms. Smaller organizations often have thin source ecosystems, meaning a single inaccurate characterization dominates AI representation. Larger organizations face the compounding problem more acutely — more sources, more derivative content, and more complex correction requirements when misrepresentation is identified.

Should we monitor AI representation regularly?

Yes. Quarterly audits of major AI platforms using stakeholder-perspective queries provide a baseline. The goal is to identify representation drift before it compounds. Events that should trigger immediate audits include: strategic pivots, major product launches, acquisitions, leadership changes, and significant press coverage.
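The cadence above can be written down as a simple rule, shown here as a sketch. It assumes "quarterly" is approximated as 90 days and that someone records trigger events using the labels below; both the interval constant and the event labels are illustrative choices, not a standard.

```python
from datetime import date, timedelta

# Trigger events listed above, as hypothetical labels.
TRIGGER_EVENTS = {
    "strategic pivot",
    "major product launch",
    "acquisition",
    "leadership change",
    "significant press coverage",
}

# Assumption: "quarterly" approximated as 90 days.
AUDIT_INTERVAL = timedelta(days=90)


def audit_due(last_audit: date, today: date, recent_events: set[str]) -> bool:
    """True if a trigger event occurred or the quarterly interval has elapsed."""
    triggered = bool(recent_events & TRIGGER_EVENTS)
    overdue = today - last_audit >= AUDIT_INTERVAL
    return triggered or overdue


print(audit_due(date(2025, 1, 1), date(2025, 2, 1), set()))            # False
print(audit_due(date(2025, 1, 1), date(2025, 2, 1), {"acquisition"}))  # True
print(audit_due(date(2025, 1, 1), date(2025, 4, 15), set()))           # True
```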

What internal function should own representation risk monitoring?

Representation risk monitoring requires someone with visibility into actual organizational strategy — what the company is, where it is going, and what it wants to be known for. This typically sits above marketing, closer to strategy or executive communications. The monitoring function must be able to identify gaps between AI representation and strategic reality, not just gaps in content production.

About the Author

Sergio D'Alberto is the founder of ABL (AI.BUSINESS.LIFE.), an AI strategy and adoption advisory. His work focuses on helping leadership teams navigate AI governance, visibility strategy, and responsible adoption.

Prior to founding ABL, Sergio spent 16 years at Microsoft, most recently in Azure Engineering.