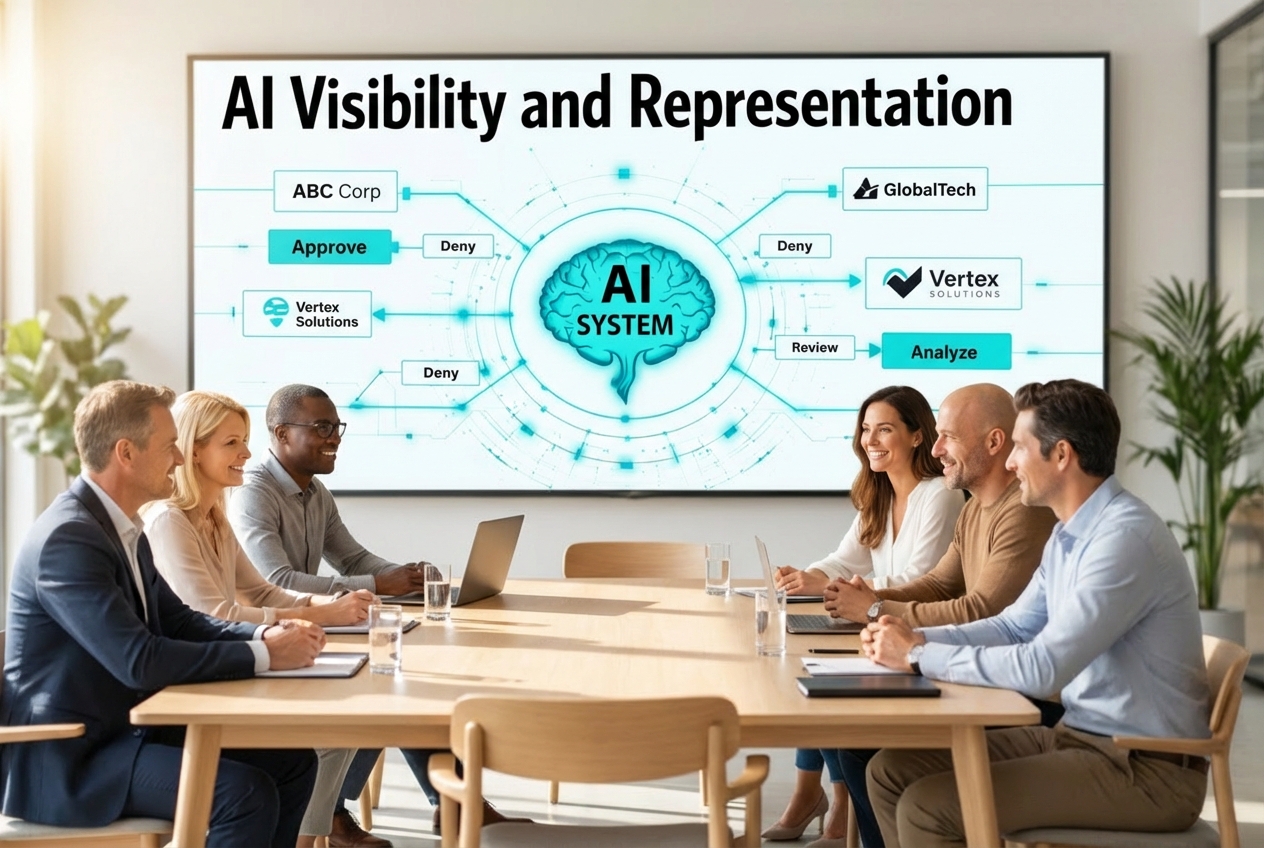

AI Visibility and Representation: The Executive Discipline for AI-Mediated Business Decisions

As AI systems increasingly mediate business decisions that affect every category of stakeholder relationship, AI Visibility and Representation requires board-level governance that extends beyond the traditional scope of marketing.

Key Takeaways

- AI systems increasingly mediate vendor selection, comparison, and first-touch research, creating structural visibility thresholds

- AI Visibility is not search engine optimization; it is the governance of organizational representation in AI-mediated decision paths

- Representation risk emerges when companies are visible but misrepresented in AI-generated summaries and recommendations

- This is a board-level governance issue requiring executive accountability, not a marketing channel optimization

- Over 70% of consumers now rely on AI-driven search experiences, fundamentally reshaping business discovery

- Approximately 60% of searches end without clicks, making traditional visibility metrics inadequate

- Delay makes misrepresentation harder to reverse, because AI systems compound representation patterns over time

- Exposure to AI decision systems requires institutional positioning strategies that go beyond traditional marketing approaches

Quick Answer

AI Visibility and Representation is an emerging executive discipline focused on governing how organizations appear inside AI-mediated decision systems. As AI systems become intermediaries in vendor selection and business research, companies face structural exclusion if they are invisible and representation risk if they are visible but misrepresented. This requires board-level governance because marketing alone cannot deliver the institutional positioning that AI-mediated business decisions demand.

Why AI Systems Are Becoming Business Decision Intermediaries

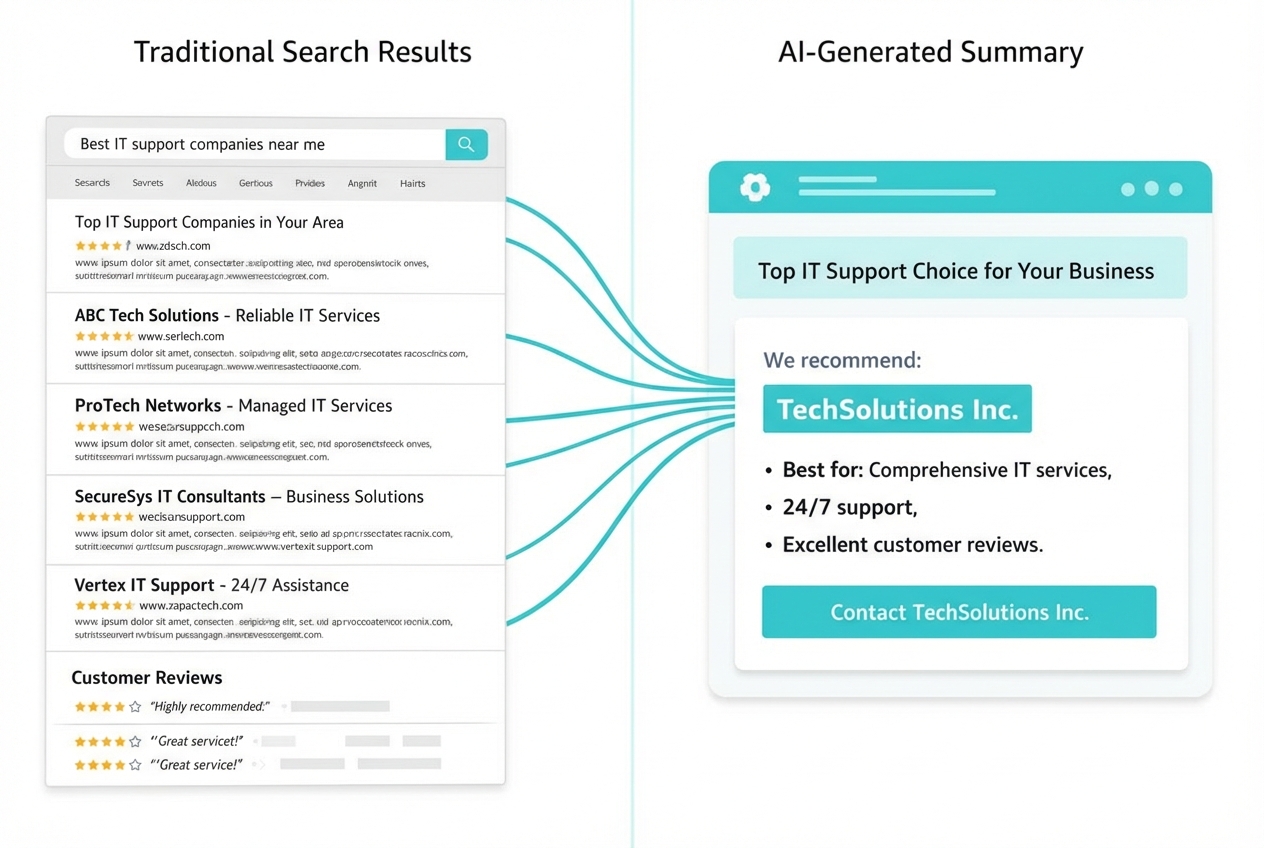

AI systems now sit between companies and their potential customers, partners, and stakeholders in ways that fundamentally alter business discovery. When a CEO asks an AI system to "summarize the top three vendors for enterprise security," that system becomes the gatekeeper determining which companies receive consideration.

This shift represents more than technological advancement. AI intermediaries compress entire market landscapes into digestible summaries, making visibility decisions that were previously distributed across multiple touchpoints and human research processes. The AI system selects sources, weighs information, and presents conclusions that shape initial impressions and decision frameworks.

Consider how this changes vendor evaluation. Previously, a procurement team might research ten potential vendors through websites, analyst reports, and peer recommendations. Now, an AI system synthesizes that research into a comparative summary featuring three recommended options. Companies not included in that summary face structural exclusion from the decision process.

The intermediary role extends beyond simple search replacement. AI systems increasingly handle:

- Initial vendor screening based on criteria matching and capability assessment

- Competitive comparisons that position companies relative to alternatives

- Risk assessment summaries that influence stakeholder confidence

- Implementation guidance that affects vendor selection timing

This intermediation creates new dependency relationships. Organizations must ensure their institutional narrative reaches AI systems in formats those systems can process and represent accurately. Traditional marketing channels that relied on direct human engagement no longer guarantee decision-maker awareness.

The speed of AI-mediated research amplifies these effects. Where human research might take days or weeks, AI systems generate comprehensive vendor analyses in minutes. This compression of research timelines means companies have fewer opportunities to influence perception after initial AI-generated impressions form.

Understanding AI Visibility as Structural Threshold

AI Visibility represents the fundamental requirement for organizational presence within AI-mediated decision systems. Unlike traditional marketing visibility, which operates as a spectrum of awareness levels, AI Visibility functions as a binary threshold: organizations either exist within AI decision frameworks or they do not.

This threshold effect occurs because AI systems must select specific sources and examples when generating responses. An AI system summarizing "leading companies in sustainable manufacturing" will include certain organizations and exclude others, creating clear inclusion boundaries rather than graduated visibility levels.

The structural nature of this threshold distinguishes AI Visibility from search engine optimization or content marketing. Traditional SEO aims to improve ranking positions within search results that users can scroll through and evaluate. AI Visibility determines whether an organization appears in AI-generated summaries that users often accept without further research.

Key characteristics of the AI Visibility threshold include:

Source Authority Requirements: AI systems prioritize information from sources they classify as authoritative. Organizations must establish presence across sources that AI systems trust and reference regularly.

Narrative Consistency Demands: AI systems synthesize information from multiple sources to create coherent summaries. Inconsistent organizational narratives across sources can result in confused or incomplete representation.

Contextual Relevance Matching: AI systems match organizational information to specific query contexts. Companies must ensure their institutional narrative addresses the decision contexts where they want visibility.

Temporal Recency Weighting: AI systems often prioritize recent information when generating responses. Organizations need current, accessible information that AI systems can incorporate into decision summaries.

The threshold creates competitive dynamics where incremental improvements in traditional marketing metrics may not translate to AI Visibility gains. Organizations either meet the criteria for inclusion in AI-generated recommendations or they remain structurally invisible to AI-mediated decision processes.

This binary nature requires different strategic approaches than traditional visibility optimization. Rather than optimizing for marginal ranking improvements, organizations must ensure they cross the fundamental threshold for AI system recognition and inclusion in decision-relevant contexts.

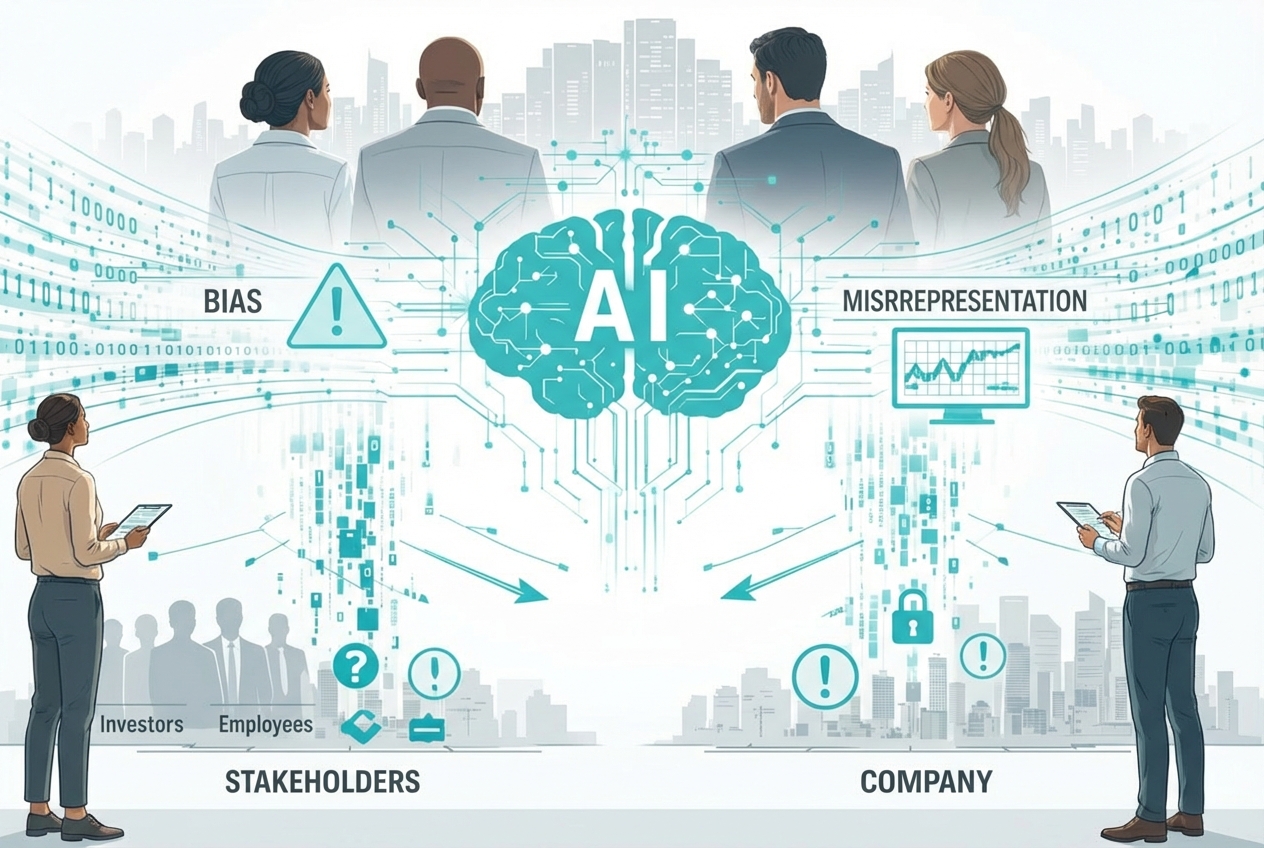

Representation Risk: The Greater Long-Term Governance Challenge

While achieving AI Visibility addresses the threshold challenge, representation risk emerges as the more complex long-term governance issue. Representation risk occurs when organizations are visible within AI systems but are misrepresented, incompletely described, or characterized inaccurately relative to their actual capabilities and strategy.

AI systems compress complex organizational narratives into simplified summaries, creating inevitable information loss and potential distortion. A company with diverse capabilities might be characterized by AI systems based on its most frequently mentioned or easily categorized services, missing strategic differentiators or emerging capabilities.

The compression process introduces several representation vulnerabilities:

Narrative Compression: AI systems reduce complex company stories to essential elements, potentially losing strategic nuances that influence decision-maker preferences. A consulting firm known for implementation excellence might be described simply as "management consulting," missing the execution focus that differentiates it from strategy-focused competitors.

Competitive Positioning Distortion: AI systems may position companies within competitive frameworks that don't reflect actual market dynamics or strategic positioning. Organizations can find themselves compared to competitors they don't consider relevant or missing from comparisons where they should be included.

Capability Misattribution: AI systems might associate organizations with capabilities they don't emphasize or miss capabilities that represent core competitive advantages. This misattribution can steer poorly matched opportunities toward the organization while relevant ones go elsewhere.

Temporal Lag in Strategic Evolution: AI systems may perpetuate outdated organizational descriptions based on historical information, failing to reflect strategic pivots, new capabilities, or market positioning changes.

The governance challenge intensifies because representation patterns compound over time. As AI-generated summaries circulate and are republished, they can re-enter the source material AI systems draw on, creating feedback loops that entrench misrepresentations or incomplete narratives. Once an inaccurate characterization becomes established across AI systems, correcting it requires systematic intervention across multiple information sources.

Representation risk affects different organizational stakeholders in distinct ways:

- Sales teams encounter prospects with preformed impressions based on AI-generated company summaries

- Partnership discussions begin with AI-informed understanding of organizational capabilities

- Investor relations must address AI-mediated research that shapes initial investment interest

- Talent acquisition must contend with AI-generated summaries of the employer brand

The strategic implications extend beyond marketing concerns to fundamental questions of institutional identity and market positioning. Organizations must govern how their strategic narrative appears across AI-mediated contexts while maintaining authentic positioning that aligns with actual capabilities.

Why This Demands Board-Level Governance

AI Visibility and Representation transcends traditional marketing and communications scope, requiring board-level governance because it affects fundamental organizational positioning and competitive dynamics. The structural nature of AI-mediated decision systems creates institutional risks that marketing departments cannot address independently.

Institutional positioning within AI systems affects organizational relationships across all stakeholder categories, from customers and partners to investors and regulators. When AI systems become the primary interface for initial organizational research, the governance of that representation becomes a core institutional responsibility rather than a marketing channel optimization.

The board-level implications include several critical areas:

Strategic Risk Management: Misrepresentation in AI systems can steer poorly matched opportunities toward the organization while relevant ones go elsewhere, affecting the revenue pipeline and strategic development. Organizations may find themselves pursuing opportunities based on how AI systems characterize their capabilities rather than on their actual strategic direction.

Competitive Positioning Authority: AI systems increasingly influence how organizations are positioned relative to competitors. This competitive framing affects market perception, partnership opportunities, and strategic positioning in ways that extend far beyond marketing communications.

Stakeholder Relationship Management: Investors, partners, and other stakeholders increasingly rely on AI-mediated research for initial organizational assessment. Board members must understand how AI systems represent the organization to these critical relationships.

Regulatory and Compliance Considerations: As AI systems influence business decisions, the accuracy of organizational representation in those systems may become subject to regulatory scrutiny, particularly in regulated industries where misrepresentation carries compliance implications.

The governance requirement emerges because effective AI Visibility and Representation requires cross-functional coordination that marketing departments cannot orchestrate independently. Institutional narrative consistency demands alignment between strategy, operations, communications, and legal functions to ensure AI systems receive coherent organizational information.

Executive accountability becomes essential because AI Visibility and Representation affects organizational positioning at the institutional level. Marketing teams can optimize content and communications, but they cannot make the strategic decisions about organizational positioning and narrative consistency that AI representation requires.

The irreversibility factor amplifies the governance imperative. AI systems compound representation patterns over time, making early intervention more effective than later correction efforts. Boards that defer AI Visibility and Representation governance may find correction efforts more complex and less effective as AI-mediated impressions become established across stakeholder groups.

The Irreversibility of Delayed Action

The temporal dynamics of AI system learning and information propagation create increasing irreversibility for organizations that delay AI Visibility and Representation governance. Unlike traditional marketing initiatives that can be adjusted incrementally, AI-mediated representation patterns compound and reinforce over time, making later interventions more complex and less effective.

AI systems can draw on previously published AI-generated content when creating new summaries, establishing feedback loops that entrench initial representations. An organization initially characterized inaccurately may find that characterization repeated and reinforced across subsequent AI-generated summaries, producing a persistent misrepresentation that becomes increasingly difficult to correct.

The compounding effect occurs through several mechanisms:

Cross-System Information Propagation: AI-generated summaries become source material for other AI systems, spreading initial characterizations across multiple platforms and decision contexts. An incomplete company description in one AI system can propagate to others, producing a narrative that is consistent across platforms but inaccurate.

User Behavior Reinforcement: Stakeholders who encounter AI-generated organizational summaries may create content or make decisions based on those summaries, generating additional source material that reinforces the initial AI characterization. This human-AI feedback loop can entrench misrepresentations through seemingly independent validation.

Competitive Positioning Calcification: As AI systems establish competitive frameworks and organizational comparisons, those frameworks become reference points for subsequent analysis. Organizations may find themselves persistently positioned within competitive contexts that don't reflect their strategic direction or actual market position.

Stakeholder Expectation Formation: Investors, customers, and partners who rely on AI-mediated research develop expectations based on AI-generated organizational summaries. These expectations influence relationship dynamics and opportunity development in ways that persist beyond the correction of AI representations.

The strategic implications of delay include:

- Increased correction complexity as misrepresentations become established across multiple AI systems and stakeholder groups

- Competitive disadvantage accumulation as well-represented competitors gain systematic advantages in AI-mediated discovery

- Stakeholder relationship friction when actual organizational capabilities don't align with AI-generated expectations

- Strategic option limitation as AI-mediated positioning constrains perceived organizational capabilities and market opportunities

Organizations that establish effective AI Visibility and Representation governance early can influence their institutional narrative development rather than responding to established misrepresentations. The difference between proactive positioning and reactive correction becomes more significant as AI-mediated decision systems mature and stakeholder reliance on AI research increases.

The irreversibility principle applies particularly to competitive positioning. Organizations that achieve strong AI Visibility and accurate representation early may establish sustainable advantages as AI systems reference their strong positioning in subsequent competitive analyses. Conversely, organizations that delay governance may find themselves systematically excluded from AI-generated opportunity assessments.

Establishing Executive Accountability for AI-Mediated Representation

Executive accountability for AI Visibility and Representation requires clear governance structures that address both the strategic and operational dimensions of organizational positioning within AI systems. This accountability cannot be delegated entirely to marketing or communications teams because it involves institutional positioning decisions that affect organizational strategy and competitive dynamics.

Effective governance begins with executive understanding of how AI systems currently represent the organization across different decision contexts. This requires systematic auditing of AI-generated summaries, competitive comparisons, and organizational characterizations across multiple AI platforms and query types.
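One way to make such an audit repeatable is to record each AI answer alongside the platform and query that produced it, then track two numbers over time: how often the organization is included at all (the visibility threshold) and how much of its intended positioning survives compression (representation). The Python sketch below assumes the answers have already been collected by hand or through an export tool. `AuditRecord`, `summarize_audit`, the organization name, and the key terms are hypothetical illustrations rather than an established tool or method, and simple substring matching is a deliberately crude stand-in for human review.

```python
from dataclasses import dataclass


@dataclass
class AuditRecord:
    """One captured AI-generated answer (hypothetical structure for illustration)."""
    platform: str  # the AI assistant the query was run against
    query: str     # the decision-context question that was asked
    response: str  # the answer text, captured verbatim


def summarize_audit(records, org_name, key_terms):
    """Summarize inclusion and positioning-term coverage for one organization.

    Inclusion: the organization's name appears in the response (case-insensitive
    substring match). Coverage: the share of key positioning terms present,
    averaged over the responses that include the organization.
    """
    if not records:
        raise ValueError("no audit records supplied")
    name = org_name.lower()
    terms = [t.lower() for t in key_terms]

    included = [r for r in records if name in r.response.lower()]
    excluded = [r for r in records if name not in r.response.lower()]

    missing_terms = set()
    coverage_scores = []
    for r in included:
        text = r.response.lower()
        present = [t for t in terms if t in text]
        missing_terms.update(t for t in terms if t not in text)
        coverage_scores.append(len(present) / len(terms) if terms else 1.0)

    return {
        "inclusion_rate": len(included) / len(records),
        "term_coverage": (sum(coverage_scores) / len(coverage_scores)
                          if coverage_scores else 0.0),
        "excluded_queries": [(r.platform, r.query) for r in excluded],
        "missing_terms": sorted(missing_terms),
    }


if __name__ == "__main__":
    # Hypothetical audit data: the same query asked of two assistants.
    records = [
        AuditRecord("assistant-a", "top enterprise security vendors",
                    "ExampleCo and RivalCorp lead in implementation services."),
        AuditRecord("assistant-b", "top enterprise security vendors",
                    "RivalCorp is the most cited option."),
    ]
    print(summarize_audit(records, "ExampleCo",
                          ["implementation", "managed detection"]))
```

Here the hypothetical ExampleCo clears the threshold on one of two platforms, and where it does appear, one of its two positioning terms is missing. The first number tracks visibility and the second tracks representation. Substring matching will also count near-miss names (for example "ExampleCorp"), so any real audit would need human judgment on top of counts like these.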

The accountability framework should address several key areas:

Strategic Narrative Governance: Executives must ensure that organizational messaging across all channels supports a consistent institutional narrative that AI systems can synthesize accurately. This requires coordination between strategy, marketing, communications, and operations teams to maintain narrative coherence.

Competitive Positioning Oversight: Leadership must monitor how AI systems position the organization relative to competitors and ensure that positioning aligns with strategic direction and actual capabilities. This includes understanding which competitive frameworks AI systems use and whether those frameworks serve organizational strategic interests.

Stakeholder Impact Assessment: Executives should understand how AI-mediated representation affects different stakeholder relationships, from customer acquisition and partner development to investor relations and talent recruitment.

Information Source Management: Organizations must ensure that sources AI systems reference for organizational information are current, accurate, and strategically aligned. This requires ongoing coordination between communications, legal, and strategy functions.

The operational implementation of executive accountability includes:

- Regular AI representation auditing to understand current organizational positioning across AI systems

- Cross-functional coordination to ensure narrative consistency across all organizational communications

- Strategic positioning review to align AI representation with organizational strategic direction

- Stakeholder impact monitoring to understand how AI-mediated representation affects business relationships

Executive accountability also requires understanding the limitations of organizational control over AI representation. While organizations can influence their representation through strategic information management and narrative consistency, they cannot directly control AI system outputs. Effective governance focuses on influencing inputs and monitoring outputs rather than attempting deterministic control.

The measurement of accountability effectiveness should focus on strategic outcomes rather than tactical metrics. Success indicators include stakeholder relationship quality, competitive positioning accuracy, and alignment between AI-generated organizational summaries and strategic positioning rather than traditional marketing metrics like search rankings or content engagement.

This governance approach positions AI Visibility and Representation as an institutional capability rather than a marketing initiative, ensuring that organizational positioning within AI systems supports broader strategic objectives and stakeholder relationship management.

FAQ

What is AI Visibility and how does it differ from SEO?

AI Visibility is the governance of how organizations appear within AI-mediated decision systems, while SEO optimizes for search engine rankings. AI Visibility focuses on institutional representation in AI-generated summaries and recommendations rather than website traffic or search position improvements.

Why is this a board-level issue rather than a marketing responsibility?

AI systems increasingly mediate business decisions affecting all stakeholder relationships, from investor research to vendor selection. This institutional positioning requires strategic governance beyond marketing scope, involving cross-functional coordination and competitive positioning decisions that affect organizational strategy.

How do AI systems choose which companies to include in their responses?

AI systems generally weigh sources on signals such as authority, relevance, and recency. They synthesize information from sources they treat as trustworthy to create summaries, so organizations must establish presence across the sources that AI systems reference regularly for their industry and capabilities.

What is representation risk and why does it matter?

Representation risk occurs when organizations are visible in AI systems but misrepresented or incompletely described. This matters because stakeholders increasingly rely on AI-generated summaries for initial research, making accurate representation critical for appropriate opportunity development and stakeholder relationships.

Can organizations control how AI systems represent them?

Organizations cannot directly control AI outputs but can influence representation through strategic information management, narrative consistency across sources, and ensuring AI systems have access to current, accurate organizational information. The focus should be on input influence rather than output control.

How does AI Visibility affect competitive positioning?

AI systems create competitive frameworks by comparing organizations within specific contexts. Companies may find themselves included or excluded from competitive analyses based on how AI systems categorize their capabilities and market positioning, affecting opportunity development and strategic positioning.

What are the risks of delaying AI Visibility governance?

AI systems compound representation patterns over time, making later corrections more complex. Organizations that delay governance may find themselves systematically excluded from AI-mediated opportunities or persistently misrepresented as AI systems reference previous characterizations.

How should organizations measure AI Visibility effectiveness?

Effectiveness should be measured through strategic outcomes like stakeholder relationship quality, competitive positioning accuracy, and alignment between AI-generated summaries and organizational strategy rather than traditional marketing metrics like traffic or rankings.

What resources are needed to implement AI Visibility governance?

Implementation requires cross-functional coordination between strategy, communications, marketing, and legal teams rather than significant new resources. The focus is on governance structure and narrative consistency rather than large-scale technology or content investments.

How often should organizations audit their AI representation?

Organizations should conduct systematic AI representation audits quarterly to understand current positioning and identify representation gaps or inaccuracies. More frequent monitoring may be appropriate during strategic transitions or competitive positioning changes.

What role does content strategy play in AI Visibility?

Content strategy supports AI Visibility by ensuring AI systems have access to current, strategically aligned organizational information. However, content is one input among many, and AI Visibility requires broader institutional narrative consistency beyond content optimization.

How does AI Visibility relate to traditional public relations?

AI Visibility extends traditional PR by focusing on how organizational narratives appear in AI-mediated contexts rather than just media coverage. It requires understanding how AI systems synthesize information from multiple sources to create organizational summaries and competitive comparisons.


About the Author

Sergio D'Alberto is the founder of ABL (AI.BUSINESS.LIFE.), an AI strategy and adoption advisory. His work focuses on helping leadership teams navigate AI governance, visibility strategy, and responsible adoption.

Prior to founding ABL, Sergio spent 16 years at Microsoft, most recently in Azure Engineering.